Deploying the HPE 3PAR StoreServ Simulator

For several years now, HPE has offered a simulated version of its 3PAR StoreServ storage array that can be deployed in virtualized environments. Although the simulator does not provide storage services to external devices, it is otherwise fully functional, allowing you to test most features and management functions. In this post, we walk through deploying and configuring the simulator.

Preparing for Deployment

Before we can begin, we need to download the HPE 3PAR StoreServ Simulator from the My HPE Software Center. For this walkthrough, I’m using version 3.2.2 MU4 of the simulator. The tarball file provided by HPE contains instructions, as well as two OVF templates. One OVF template contains the management node software, while the other includes the virtualized enclosure/disk shelves.

Each VM deployed for the HPE 3PAR StoreServ Simulator has three virtual NICs. The first NIC provides management connectivity. The second NIC connects to the Remote Copy network, if that feature is required. The third NIC carries communication among the three nodes (the two cluster nodes and the ESD node) and must be connected to a private network.
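If you want to sanity-check the network layout before deploying, the NIC assignments described above can be captured as a small Python plan. This is a minimal, hypothetical sketch: the port group names (PG-3PAR-Mgmt, PG-3PAR-RCIP, PG-3PAR-Private) are placeholders I made up, not names required by HPE or VMware, and the check encodes only the rule stated above that the third NIC of all three VMs shares one private network.

```python
from dataclasses import dataclass

# Hypothetical port group names; substitute the networks in your environment.
MGMT_NET = "PG-3PAR-Mgmt"
RCIP_NET = "PG-3PAR-RCIP"
PRIVATE_NET = "PG-3PAR-Private"  # isolated network for node-to-node traffic


@dataclass(frozen=True)
class VmNics:
    hostname: str
    nic1: str  # management
    nic2: str  # Remote Copy (only needed if the feature is used)
    nic3: str  # private interconnect among the three VMs


PLAN = [
    VmNics("vLab-3PAR-Node0", MGMT_NET, RCIP_NET, PRIVATE_NET),
    VmNics("vLab-3PAR-Node1", MGMT_NET, RCIP_NET, PRIVATE_NET),
    VmNics("vLAB-3PAR-ESD", MGMT_NET, RCIP_NET, PRIVATE_NET),
]


def check_plan(plan):
    """Return a list of problems found in the NIC plan (empty means OK)."""
    problems = []
    if len(plan) != 3:
        problems.append(f"expected 3 VMs, found {len(plan)}")
    private_nets = {vm.nic3 for vm in plan}
    if len(private_nets) != 1:
        problems.append(f"NIC 3 must share one private network, found {sorted(private_nets)}")
    for vm in plan:
        if vm.nic3 in (vm.nic1, vm.nic2):
            problems.append(f"{vm.hostname}: NIC 3 must not reuse the management or RCIP network")
    return problems


if __name__ == "__main__":
    issues = check_plan(PLAN)
    print("NIC plan OK" if not issues else "\n".join(issues))
```

Keeping the plan as data makes it easy to reuse the same names when you assign networks to each VM after deployment.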

Deploying the Management Cluster Nodes

For this walkthrough, the two management cluster node VMs are named vLab-3PAR-Node0 and vLab-3PAR-Node1. Because deploying these nodes requires no input beyond selecting the necessary infrastructure resources, you can follow VMware’s documentation to Deploy an OVF or OVA Template. After the VMs are deployed, configure the network assigned to each NIC as described above.

Deploying the ESD Node

The ESD node simulates the HPE 3PAR drive enclosures/cages and hard drives. The simulator uses only one instance of this virtual machine. For this walkthrough, the ESD node hostname is vLAB-3PAR-ESD. Again, because deploying the node requires no input beyond selecting the necessary infrastructure resources, you can follow VMware’s documentation to Deploy an OVF or OVA Template. After the VM is deployed, configure the network assigned to each NIC as described above.

Power On and Initial Configuration

The next step is to power on all three virtual machines. Before doing so, I suggest taking a snapshot of all three VMs in case you need to revert and restart the configuration process.

Configuring the ESD Node

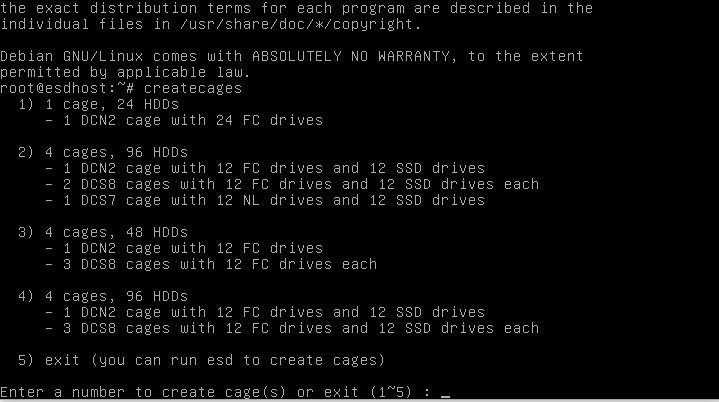

After the VMs finish booting, open a console session to the ESD node and log in with the username root and the password root. Next, initialize the ESD’s storage configuration by typing the command createcages and selecting one of the menu options provided.

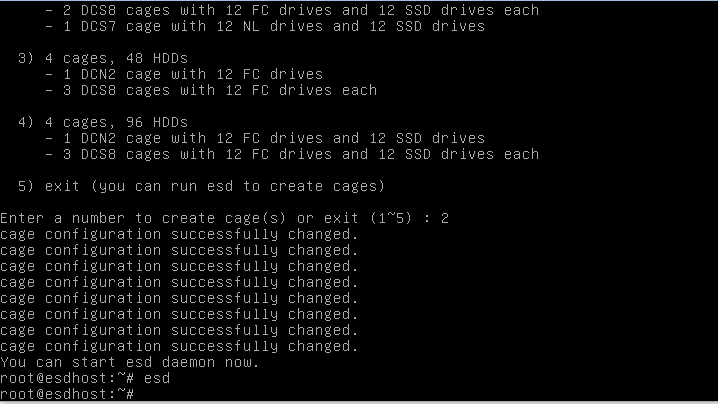

Because I want all three drive types (NL, FC, and SSD) in my instance of the simulator, I select option 2. Cage creation completes quickly, and when it finishes, the console displays several lines stating, “Cage configuration successfully changed.” Next, we start the ESD daemon by executing the command esd. The command produces no additional output, so do not be alarmed if it seems like nothing has happened.

Configuring the Cluster Nodes

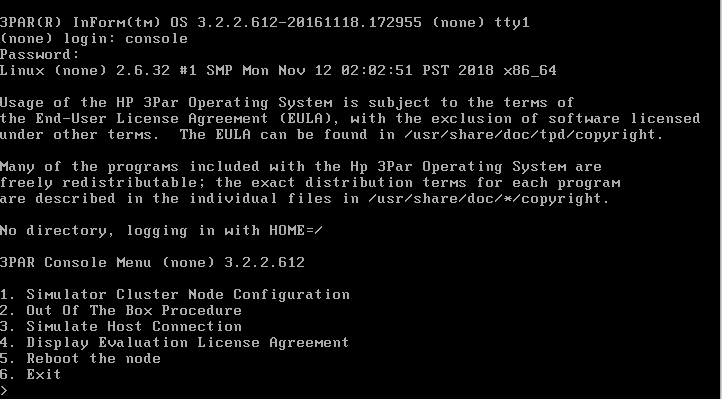

Now that we have finished configuring the ESD node, we need to configure the management cluster nodes. Open a console session to the first node (vLab-3PAR-Node0), and log in with the username console and the password crH72mkr. Next, choose option 1, titled “Simulator Cluster Node Configuration.”

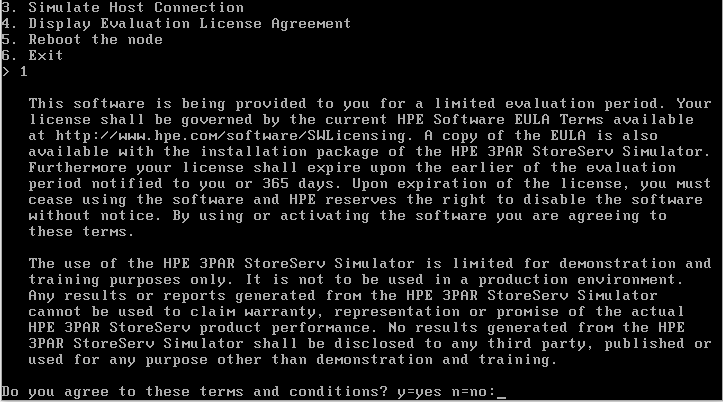

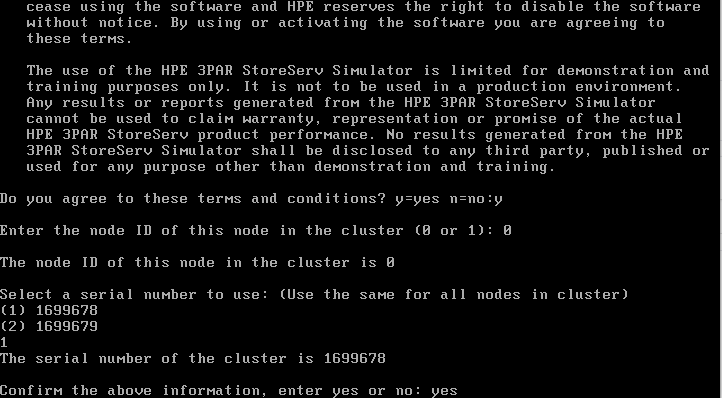

We are presented with a software license agreement, and we enter Y to agree to its terms and conditions.

After we agree to the terms and conditions, the cluster node prompts us for a “node ID.” Since this is the first node, enter 0. Next, the cluster node prompts us to select one of two serial numbers. For our purposes, select the first option, 1699678. If you deploy a second simulator in the future to test the Remote Copy feature, select the second serial number when configuring that instance so that each simulator has its own serial number. Finally, enter yes at the prompt to “Confirm the above information.”

We repeat the same process on the second node (vLab-3PAR-Node1), except that we enter 1 as the “node ID” instead of 0. Finally, from the main menu of each node, select option 5, labeled “Reboot the node,” to reboot it.
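The answers given on the two cluster nodes follow a simple pattern in this walkthrough: node IDs 0 and 1, with the same serial number (1699678) on both nodes. The Python sketch below records those answers and checks them. The NodeAnswers class and the helper functions are hypothetical illustrations for keeping track of your inputs; they are not part of the simulator.

```python
from dataclasses import dataclass

FIRST_SERIAL = "1699678"  # first serial number offered by the node configuration menu


@dataclass(frozen=True)
class NodeAnswers:
    hostname: str
    node_id: int
    serial: str


CLUSTER = [
    NodeAnswers("vLab-3PAR-Node0", 0, FIRST_SERIAL),
    NodeAnswers("vLab-3PAR-Node1", 1, FIRST_SERIAL),
]


def validate_cluster(nodes):
    """Check node IDs and serials; return the serial shared by the cluster."""
    ids = sorted(n.node_id for n in nodes)
    if ids != [0, 1]:
        raise ValueError(f"node IDs should be 0 and 1, got {ids}")
    serials = {n.serial for n in nodes}
    if len(serials) != 1:
        raise ValueError(f"both nodes should use the same serial, got {sorted(serials)}")
    return serials.pop()


def ootb_node(nodes):
    """Hostname of node 0, the node that later runs the Out of the Box procedure."""
    return next(n.hostname for n in nodes if n.node_id == 0)


if __name__ == "__main__":
    print("serial:", validate_cluster(CLUSTER))
    print("run OOTB on:", ootb_node(CLUSTER))
```

A second simulator deployed for Remote Copy testing would get its own list of NodeAnswers using the second serial number offered by the menu.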

Execute the Out of the Box Procedure

The last major step is to complete the “Out of the Box” (OOTB) procedure. This procedure is executed only once, from the cluster node configured with “Node ID” 0. To start, log in to cluster node 0 (vLab-3PAR-Node0) using the username console and the password crH72mkr. From the resulting menu, select option 2, labeled “Out of the Box Procedure.” We are first greeted with a screen stating that the “Cluster is in a proper manual startup state to proceed” and asking whether we wish to continue. We enter yes to continue.

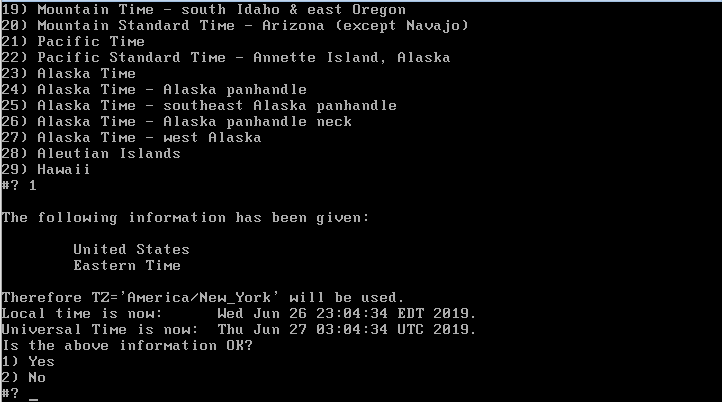

Next, the OOTB process tells us that the cluster has two nodes: “Node 0” and “Node 1.” We enter C at the prompt to continue. We are then asked to specify a location so that the correct time zone rules can be set. We choose option 2) Americas, option 49) United States, and then option 1) Eastern (most areas), which results in a time zone value of TZ=‘America/New_York’. We then choose 1) Yes to confirm that the information is OK. You should select the values appropriate to your location.

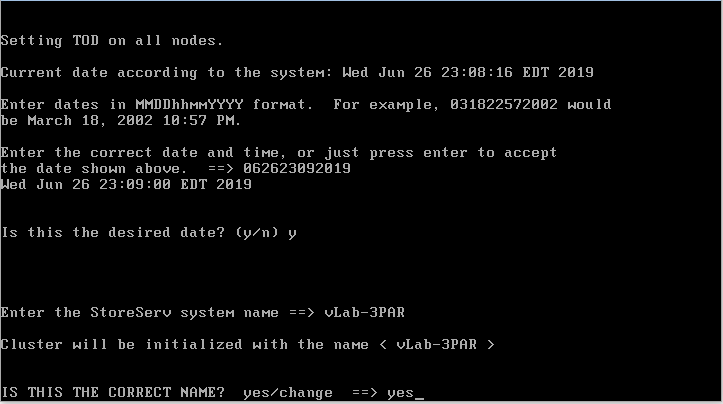

The OOTB process next asks us to confirm the date and time. If the value is correct, just press Enter to continue. In my case, the time was slightly off, so I entered a new value before pressing Enter. After we confirm the date and time, the OOTB process requests a system name. For this walkthrough, we use vLab-3PAR. We then enter yes to confirm the cluster name.
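Since the OOTB process sets the time zone before asking you to confirm the date and time, it can help to check the current time in that zone from another machine before answering. This minimal Python sketch uses the standard-library zoneinfo module (Python 3.9+) with the America/New_York value reported above; it assumes the host has IANA time zone data available (on some platforms, such as Windows, that means installing the tzdata package).

```python
from datetime import datetime
from zoneinfo import ZoneInfo

# TZ value the OOTB procedure reported for Americas > United States > Eastern (most areas).
TZ_NAME = "America/New_York"


def local_time(tz_name: str) -> datetime:
    """Current time in the given IANA zone; raises ZoneInfoNotFoundError for unknown names."""
    return datetime.now(ZoneInfo(tz_name))


if __name__ == "__main__":
    now = local_time(TZ_NAME)
    print(f"{TZ_NAME}: {now:%Y-%m-%d %H:%M:%S %Z} (UTC offset {now:%z})")
```

Replace TZ_NAME with the zone that matches the location you selected.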

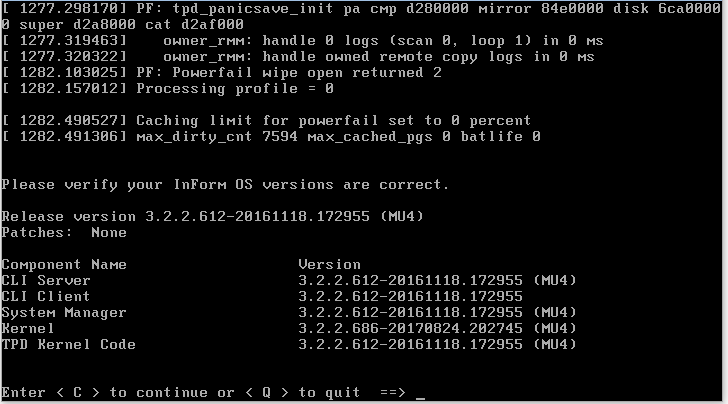

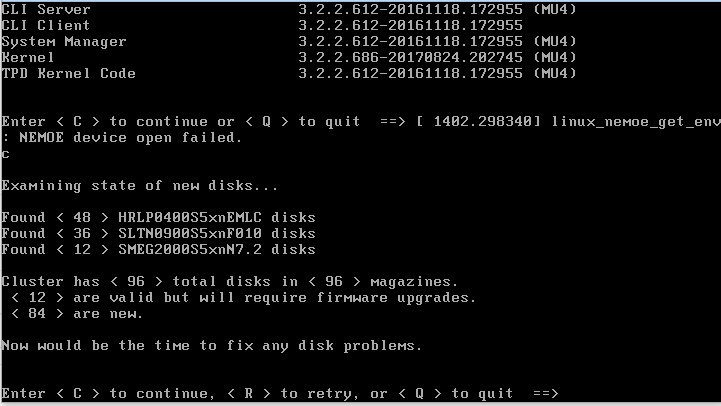

After we submit yes, the cluster initialization process begins and then asks us to “verify your InForm OS version are correct.” We enter C to continue.

The next output provided by the process is a list of the disk types and counts found. We will enter C again to continue the process.

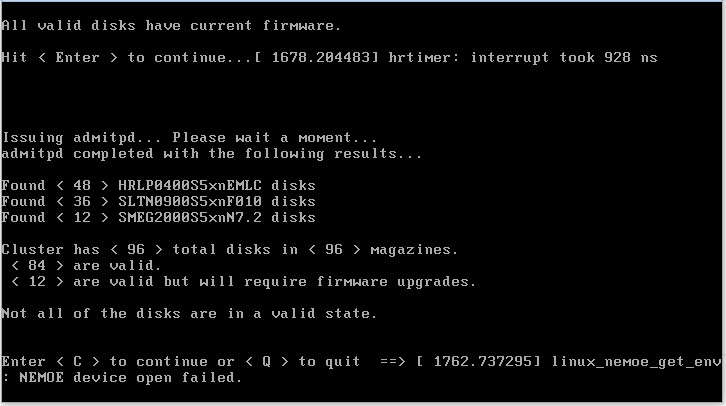

After we confirm the disk counts, the process prompts us to upgrade the firmware on the disks. We enter C to continue, because the simulated drives do not require firmware updates. We are then prompted to press Enter to continue, and we do so. The cluster node then issues an “admitpd” command and displays the results. Although the console states that some drives “will required firmware updates,” I confirmed that choosing to upgrade the firmware during the previous step does not change the outcome. We enter C to continue.

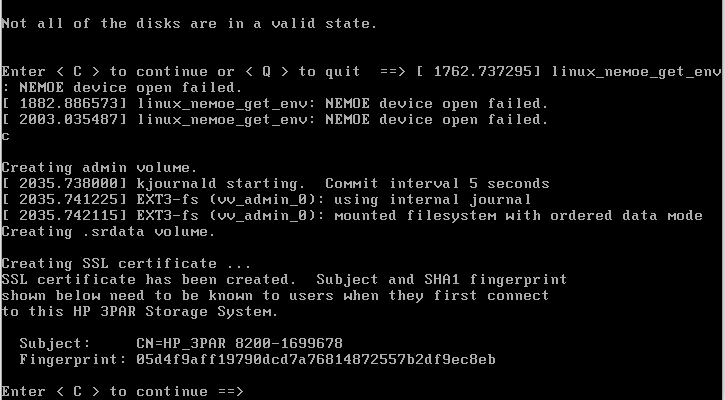

The OOTB process will create a new admin volume and SSL certificates. It then presents us with the Subject and Fingerprint of the certificate. We enter C to continue.

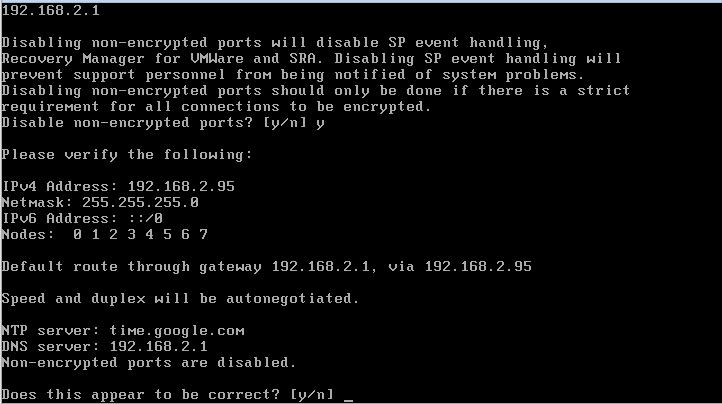

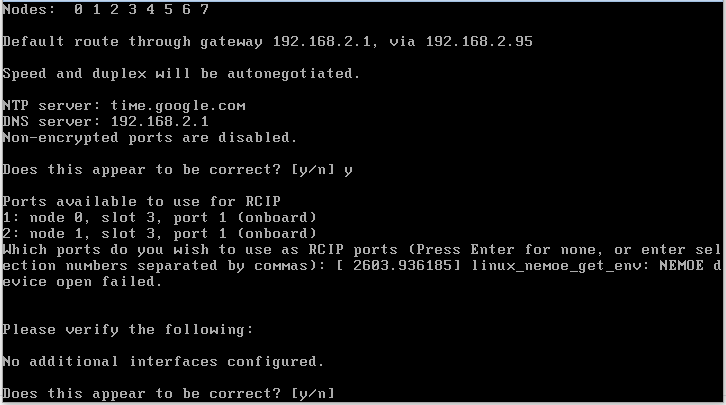

The next step is to give the cluster a management IP address. In this exercise, we provide only an IPv4 address, so we select option 1) IPv4 Address and then enter an IP address, subnet mask, and gateway for the cluster. When prompted, we enter auto for the interface speed and duplex. When prompted for an NTP server, we enter time.google.com. Next, we enter our DNS servers. When prompted to “Disable non-encrypted ports,” we enter Y. Finally, we review the network settings and enter Y to confirm and continue.
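Before typing the address, subnet mask, and gateway at the prompt, you can check that they are consistent with one another. This minimal Python sketch uses the standard-library ipaddress module; the addresses in the usage example come from the 192.0.2.0/24 documentation range and are placeholders, and the checks are my own sanity rules rather than validation performed by the OOTB procedure.

```python
import ipaddress


def check_mgmt_settings(ip: str, netmask: str, gateway: str) -> ipaddress.IPv4Network:
    """Validate the cluster IPv4 address, subnet mask, and gateway; return the subnet."""
    # IPv4Interface accepts a dotted-decimal mask after the slash, e.g. "x.x.x.x/255.255.255.0".
    iface = ipaddress.IPv4Interface(f"{ip}/{netmask}")
    gw = ipaddress.IPv4Address(gateway)
    net = iface.network
    if iface.ip in (net.network_address, net.broadcast_address):
        raise ValueError(f"{ip} is the network or broadcast address of {net}")
    if gw not in net:
        raise ValueError(f"gateway {gw} is outside {net}")
    if gw == iface.ip:
        raise ValueError("the gateway and the cluster IP address must differ")
    return net


if __name__ == "__main__":
    # Placeholder values from the documentation range; use your own.
    print(check_mgmt_settings("192.0.2.50", "255.255.255.0", "192.0.2.1"))
```

Malformed input, such as a mistyped mask, also raises a ValueError subclass from the ipaddress module, so one exception handler covers every failure.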

We are then asked, “Which ports do you wish to use as RCIP ports.” Since we aren’t planning to use the Remote Copy feature, we press Enter to indicate “none” and then enter Y when asked to confirm.

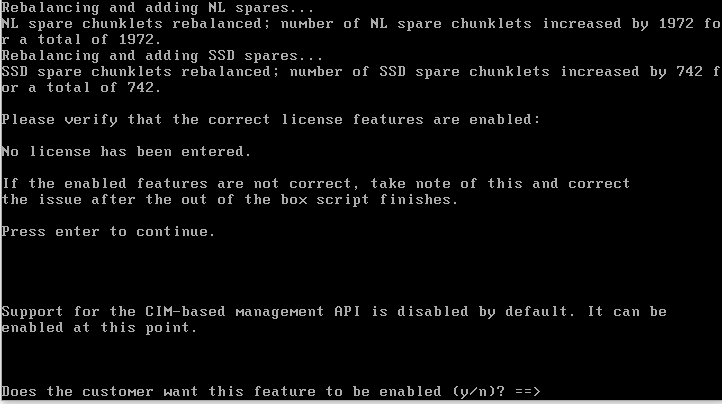

The initialization process continues for a while and then prompts us for input regarding spare chunklet allocation. We enter D for the default setting. Next, we are prompted to confirm the licensed features. The list will be empty, so we press Enter to continue. For the next question, regarding CIM Management, we enter Y.

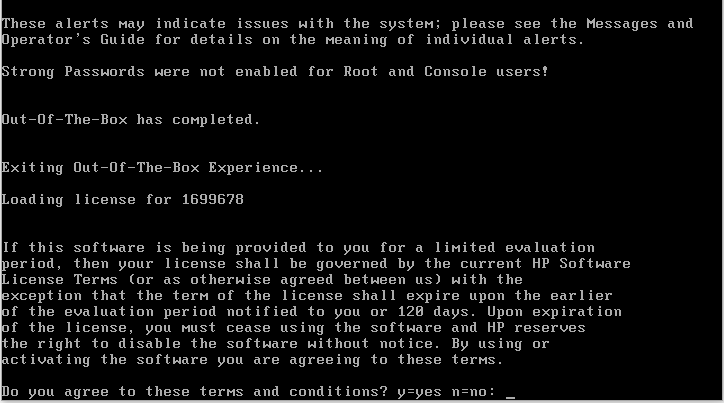

When prompted to confirm the license agreement, we will again enter Y.

That’s the final step. The HPE 3PAR StoreServ Simulator is now fully configured and ready for use. If you haven’t already done so, download the HPE 3PAR StoreServ Management Console virtual appliance to begin managing the simulator.
